Conditions and Expectations for Accepting AI-Based Design Feedback: An Exploratory Study Based on Interviews with Professional UI Designers

1 Overview

- An interview-based study exploring how AI-generated design feedback can genuinely support the thinking and decision-making of practicing UI designers, which conditions make that feedback acceptable, and what this implies for future system design.

- Conducted in-depth remote interviews with 13 working UI designers, thematically analyzed approximately 21 hours of recorded sessions, and synthesized findings on the value, limitations, expectations, and concerns surrounding AI feedback in real-world practice.

- Role: Lead researcher, end-to-end interview research

2 Context

As AI technologies, including large language models, have grown increasingly capable, their potential in the GUI design space has expanded, particularly in rule-based areas such as error detection, heuristic evaluation, and basic visual review. But there is a meaningful gap between what is technically possible and what practicing designers actually find useful. This project was born out of that gap. The goal was to understand, through the lived experience of working designers, how far AI can go in supporting professional design judgment, under what conditions its feedback would be accepted, and what limitations currently make it difficult to apply in practice.

Real-world design feedback rarely stops at flagging alignment issues or style errors. Actual design decisions involve layered judgment, balancing user experience, brand context, screen-to-screen flow, functional requirements, consistency, development constraints, and organizational standards all at once. This means AI design feedback cannot be approached purely as a static evaluation tool; it needs to be designed with an understanding of the context and constraints of real practice.

3 Approach

The study was designed to surface both the actual decision-making moments in professional UI design and the conditions that make feedback useful at those moments.

Interviews were structured around five core themes: current UI design workflows, design thinking and decision-making experiences, feedback in professional practice, experiences using AI tools in UI design work, and responses to a UI generation tool demo and future-scenario prompts. Together, these themes covered both present and future possibilities for human-AI collaboration. Rather than simply asking “is AI useful?”, the goal was to build a structured understanding of when and where designers find judgment most difficult, and what forms of feedback genuinely help.

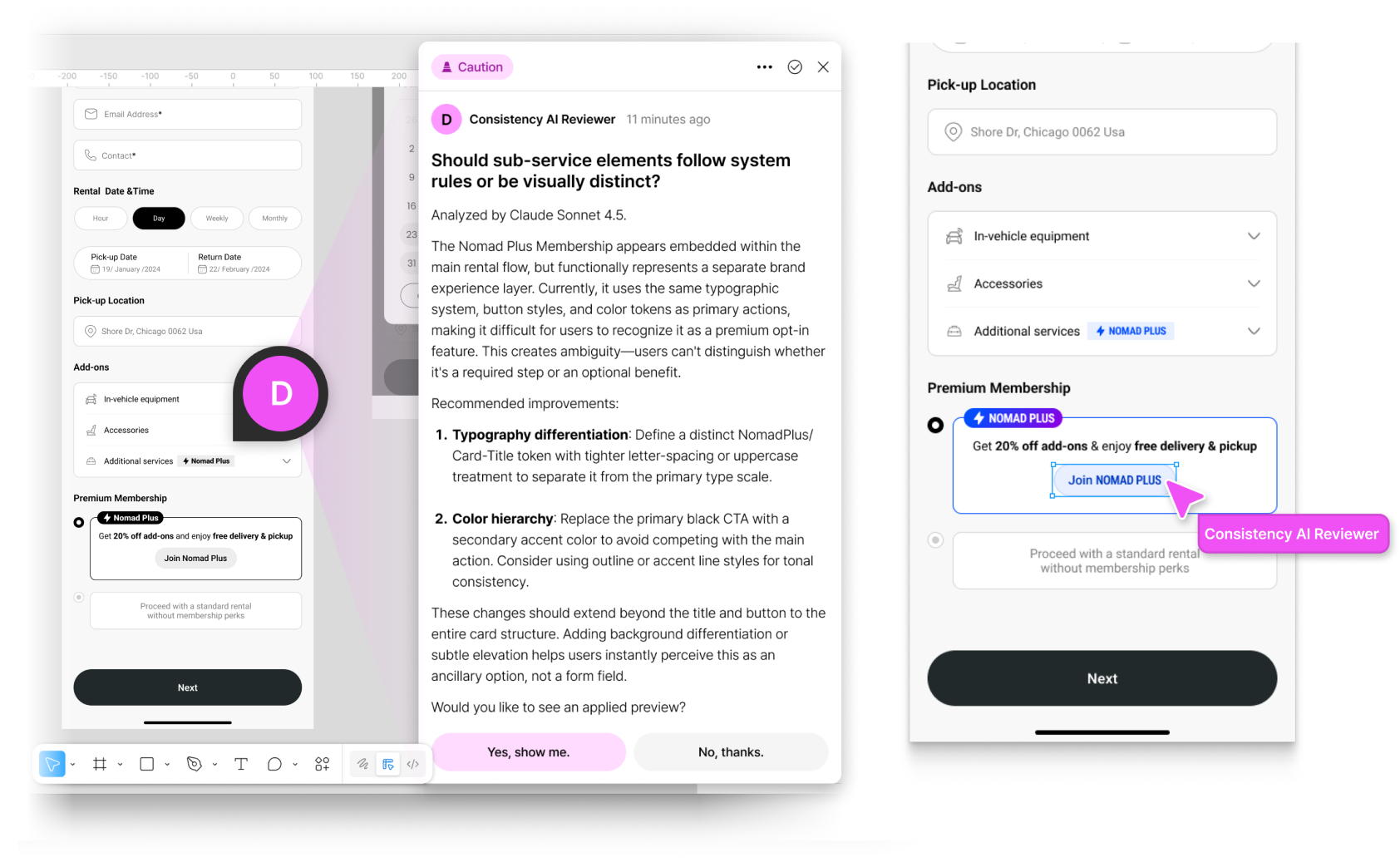

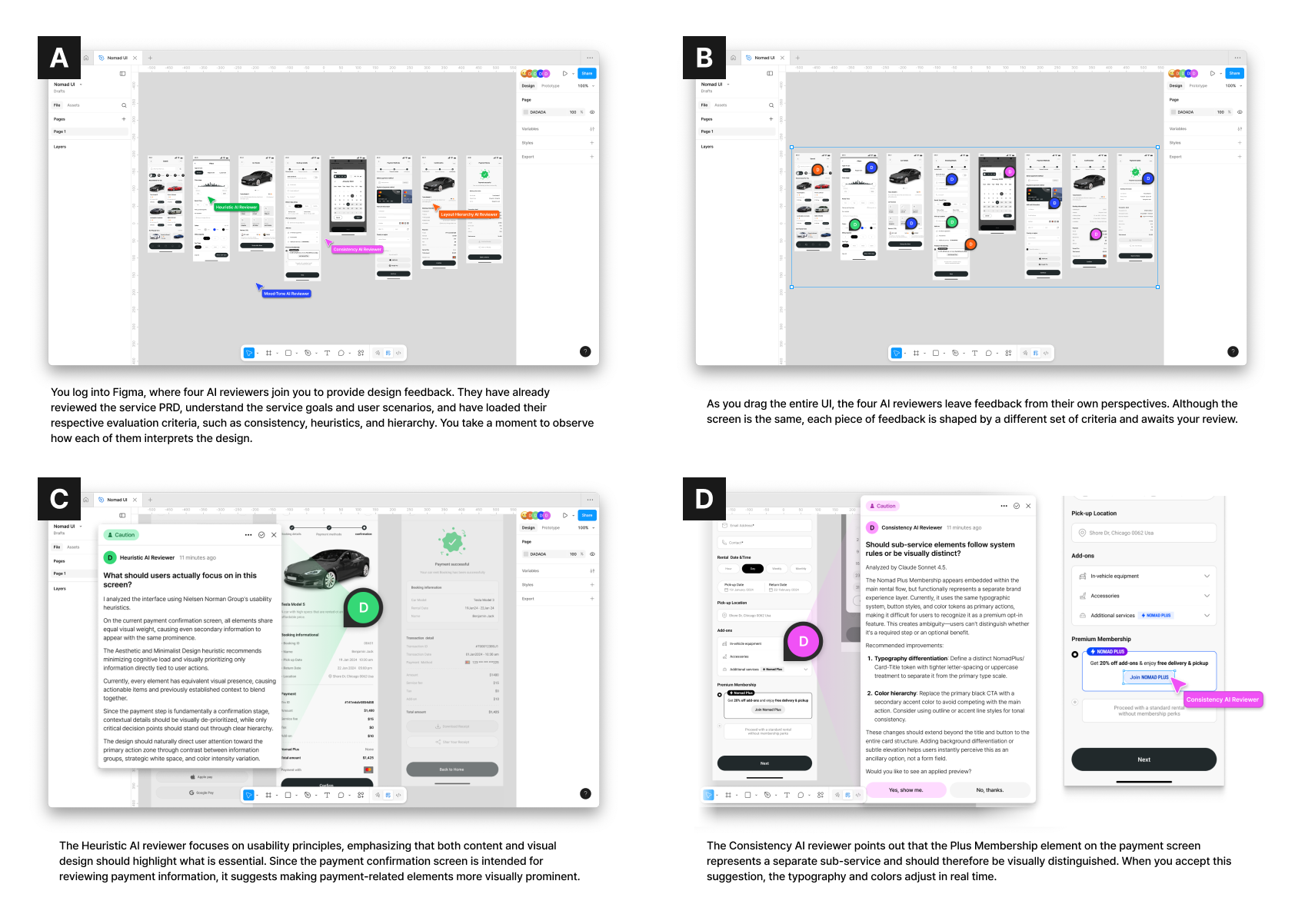

To anchor the interviews in concrete professional context, supplementary materials were developed. Before each session, participants completed a worksheet mapping out their organization’s UI design process and workflow. A demo video of a current UI generation tool was shown to all participants to establish a common baseline understanding of today’s AI capabilities. A separate future-scenario document helped participants imagine a collaborative environment in which AI both gives and receives design feedback. Its scenarios included working alongside AIs with different areas of expertise, and receiving both general evaluations and feedback directly actionable in practice.

Participants were 13 working UI designers who were currently using or had experimented with AI in their design practice. Interviews were conducted remotely via Zoom between October 21 and November 12, 2025, each lasting approximately 90 minutes. Participants were recruited through online designer communities, UI designer networks, and referrals. Their work contexts included web applications (9), mobile applications (8), and hardware product UI (1). These counts overlap because some participants worked across more than one platform. The majority reported using AI in their work frequently or consistently.

Analysis was based on approximately 21 hours (1,262 minutes) of recorded interview data. Key terms were extracted from interview notes to generate initial code candidates, and two researchers collaboratively agreed on a first-pass code set. This set was then refined by matching codes against transcripts and direct quotes: unnecessary codes were removed and code definitions were revised to produce a final code list. The resulting 192 codes were clustered through affinity mapping to surface overarching themes and key insights.

4 Outcome

4-1 Key Findings

Decision-making in professional UI design is not simply about refining a single screen. It is a complex, multi-constraint judgment process that involves simultaneously managing new possibilities and competing limitations.

Designers described their judgment as particularly demanding in the following situations:

- When exploring novel design approaches with few established references

- When accounting for a wide range of use cases and edge cases at once

- When determining the right design direction based on information hierarchy and visual weight

- When finding the best solution within real-world constraints such as timeline, team capacity, and technical feasibility

- When defining and maintaining a design system over time

Useful feedback in practice is not just evaluation. It has value when it is structured to help designers make better judgments.

The conditions for good feedback that emerged from the interviews include:

- Logical rationale: explaining why something is a problem, not just that it is

- Perspective that expands thinking: surfacing possibilities the designer hadn’t yet considered

- Actionable alternatives: pointing toward a next step that can actually be taken

- Reflection of project context: grounded in an understanding of the specific service and its goals

- Awareness of technical constraints: accounting for the development environment and implementation feasibility

- Respect for designer intent: acknowledging the reasoning behind existing decisions

- Questions that prompt articulation: encouraging designers to revisit and re-examine their own rationale

Current AI feedback showed clear strengths in quick first-pass review and low-stakes interaction, but was consistently limited in its ability to reflect the full context of real practice.

Strengths

- Low barrier to asking for input, and easy to ignore if the feedback isn’t useful

- Flat interaction structure that makes pushback and follow-up questions feel natural

- Efficient for checking against defined standards or guidelines

- Helpful for quickly gaining a basic user-perspective read

Limitations

- Unable to reflect project-specific design systems and service policies

- Does not produce outputs that are immediately usable in professional workflows

- Tends toward surface-level, formulaic responses

- Still requires human validation before it can be acted on

- Text-based feedback doesn’t map naturally onto the visual nature of design work

4-2 Design Implications for AI Feedback Systems

- Effective AI design feedback must support designer judgment from a position of understanding the designer’s intent and the project’s context.

For feedback to be accepted as actionable in real practice, AI needs to account for the design system, service policies, project goals, current stage of work, target users, surrounding screen flow, development environment and technical constraints, and cultural context. AI should be designed not as a generic evaluator presenting universal right answers, but as a judgment-support tool that operates within the specific conditions of a given project.

- The format of feedback needs to shift away from evaluation-centered language and toward alternatives, visuals, and the practical vocabulary of design work.

Designers found feedback more useful when it was delivered in concise, immediately graspable language rather than abstract explanations. They valued visual examples comparing as-is and to-be states, clearly identified targets, and concrete alternative directions they could act on right away. Feedback framed as questions that prompted designers to articulate their intent helped them re-examine and clarify their own reasoning.

- In terms of interaction structure, a bidirectional, collaborative model that keeps control firmly in the designer’s hands was consistently identified as essential.

Designers preferred structures where they could set the focus and scope of feedback themselves, explain their intent, and push back on or question the AI’s reasoning, rather than receiving one-directional evaluations. They also saw modalities as more appropriate when they let designers select specific areas within their design tool and request feedback only on what they need, working unobtrusively without interrupting the flow of work.

- Building trust requires that the AI’s understanding of context and the basis for its judgments be made visible.

Participants reported higher confidence in AI feedback when it first showed how it understood the current situation, or explained what materials and criteria it used to form its assessment. Trust was found to stem less from tone and more from demonstrated contextual understanding, process transparency, and the quality of the output itself.

4-3 Expected Changes in Practice

- The adoption of AI design feedback is likely to reduce repetitive review work, speed up early exploration, and raise overall productivity.

If AI handles consistency checks, first-pass reviews, and rapid exploration of alternatives, it could meaningfully reduce the time and resources spent on those tasks. Particularly in under-resourced environments or situations where peer feedback is hard to come by, AI was seen as potentially filling something close to the role of a junior design collaborator.

- At the same time, the importance of final judgment and higher-order decision-making is expected to become more, not less, pronounced.

Reading the overall arc of a user journey, balancing context with visual quality and information delivery, and making final calls on the direction of a design were consistently identified as responsibilities that will remain at the core of the designer’s role. There was a strong sense that the designer’s work is shifting toward greater emphasis on strategic judgment and ultimate accountability for quality, rather than execution alone.

- However, concerns also surfaced about over-reliance on AI weakening critical thinking or gradually encroaching on the designer’s role.

If designers repeatedly follow AI-generated suggestions without engaging critically, the scope of their exploration and the development of their own judgment may narrow over time. For this reason, AI design feedback needs to be designed not as automation that replaces thinking, but as a system that expands and stress-tests the designer’s own judgment.

5 Reflection

- This project reframed AI design feedback not as an evaluator delivering right answers, but as a support system that extends and validates designer judgment. It became clear that AI feedback has real practical value only when it goes beyond static assessment and provides material that reflects context, intent, and constraints together. The research also indicated that good feedback, whether it comes from AI or from human colleagues, is accepted in practice when its structure combines rationale, context, and alternatives. AI needs to be designed not to replace the designer’s thinking, but to broaden it and prompt re-examination.

- At the same time, this study remains at a stage where real-world applicability still needs to be tested more rigorously. With a sample of 13 interview participants, the findings are not readily generalizable across the industry as a whole. And because the research is interview-based, it does not behaviorally or quantitatively verify whether AI feedback actually improves design quality or decision-making outcomes in practice. Next steps would involve expanding the sample to include a wider range of organization sizes and domains, conducting task-based experiments, and running quantitative studies to empirically validate the effects of AI design feedback.